Mini Artifacts

AI chats lose context the moment you scroll up. What if conversation-aware cards could persist, evolve, and dissolve to keep planning visible?

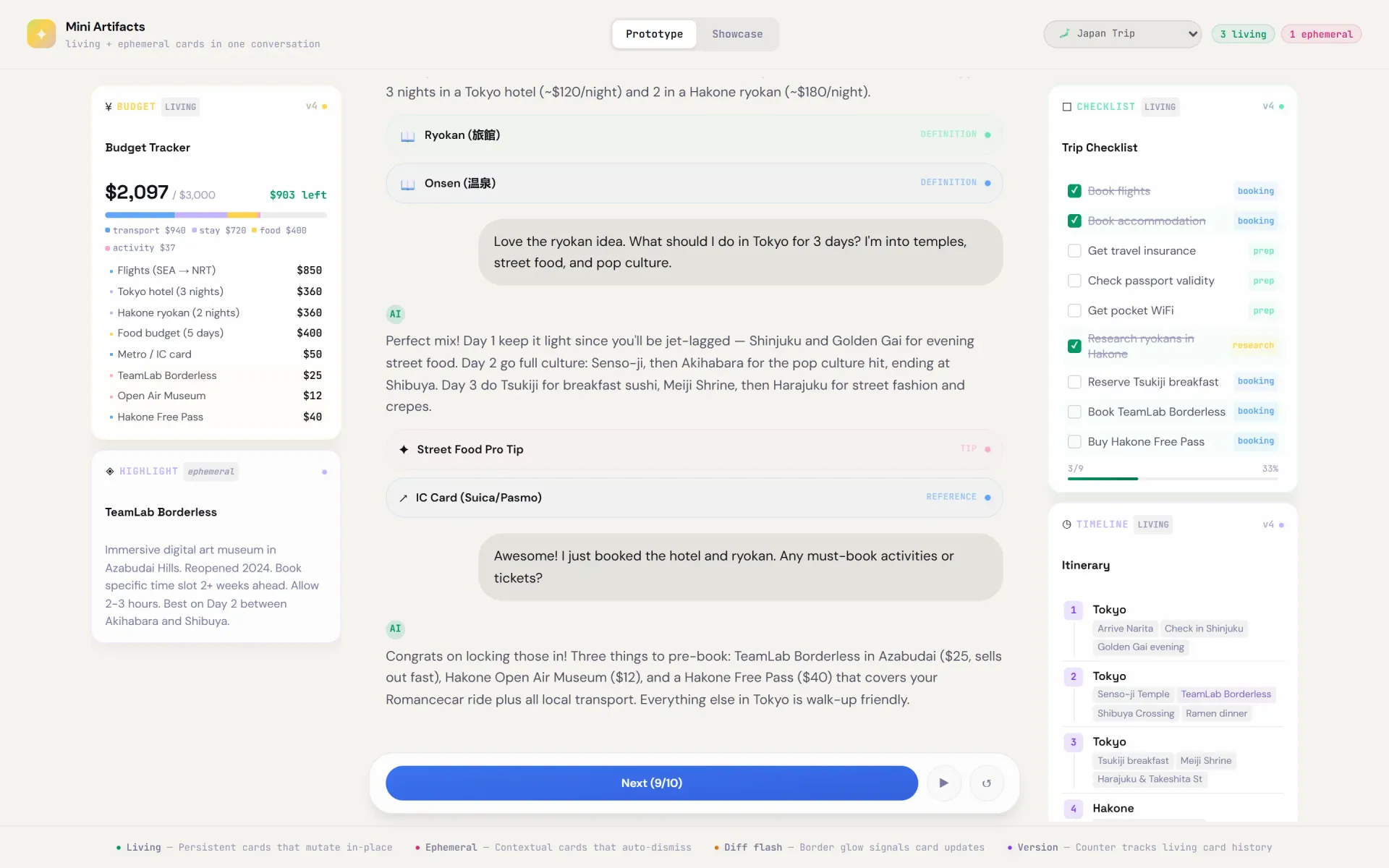

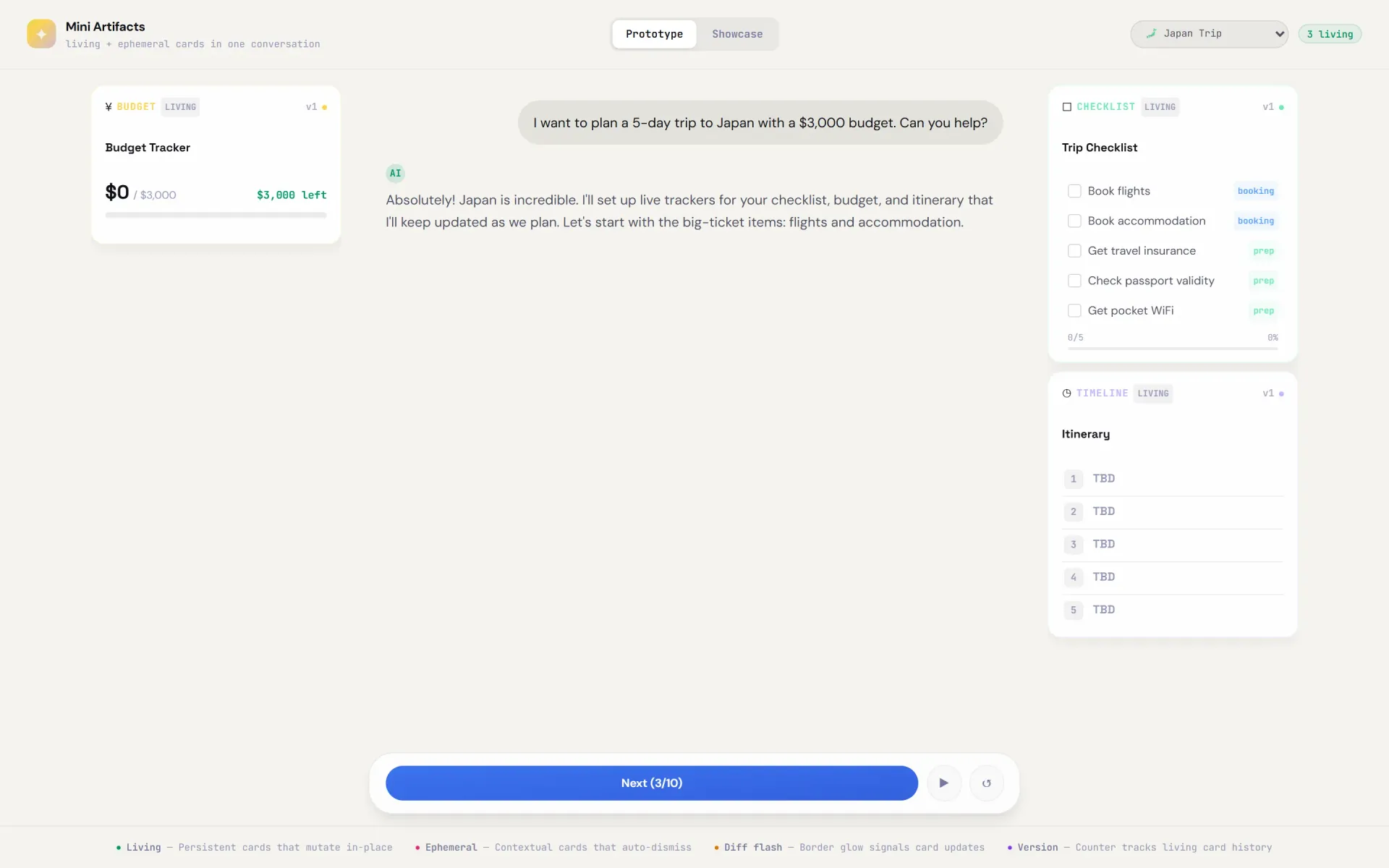

Three-column layout: budget tracker and ephemeral cards (left), conversation (center), checklist and timeline (right)

Try the prototype

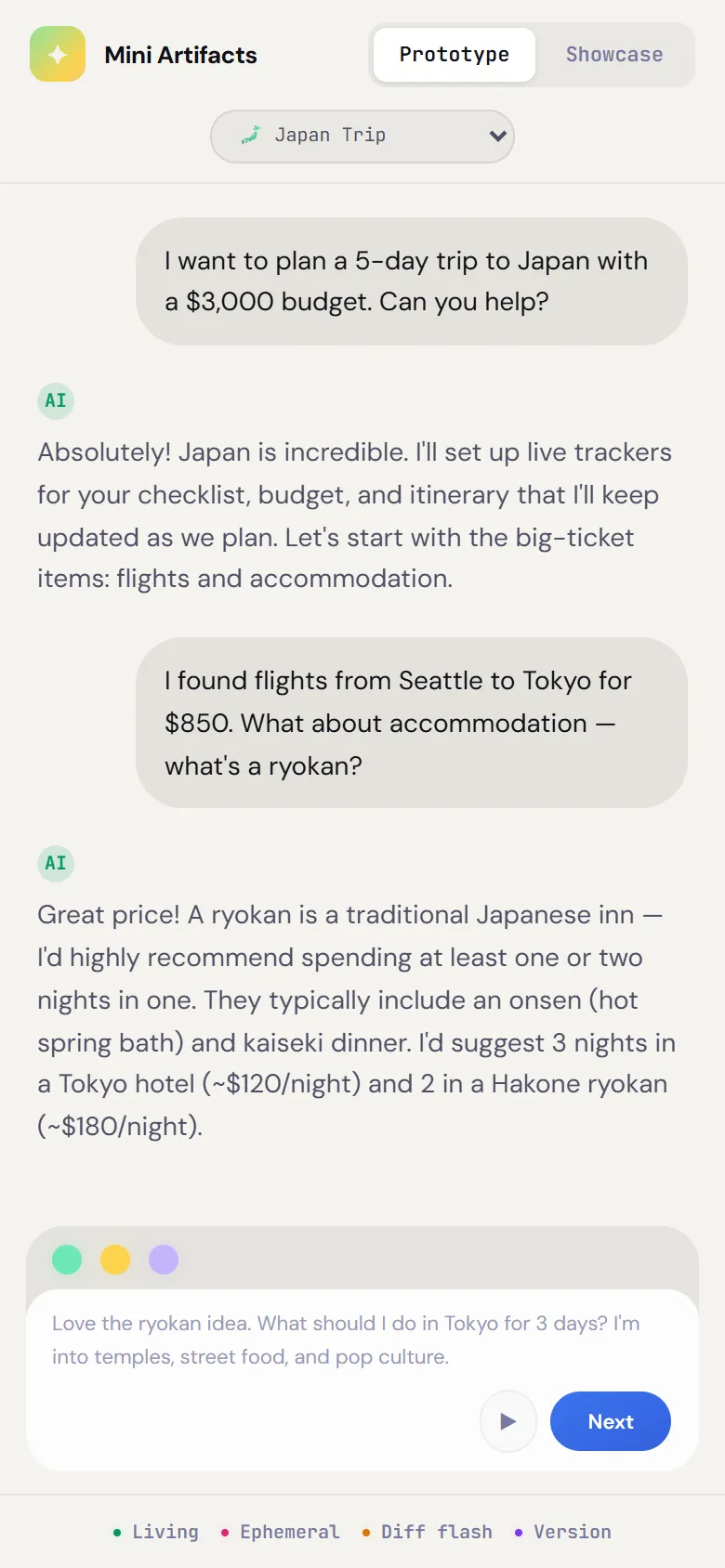

Click “Next” to advance the conversation

The Problem

Chat buries structured information

Across ChatGPT, Claude, and Gemini, the same pattern emerges: when users plan complex tasks through chat, structured information — checklists, budgets, timelines — gets lost in the message stream.

The Idea

Give AI a second output channel

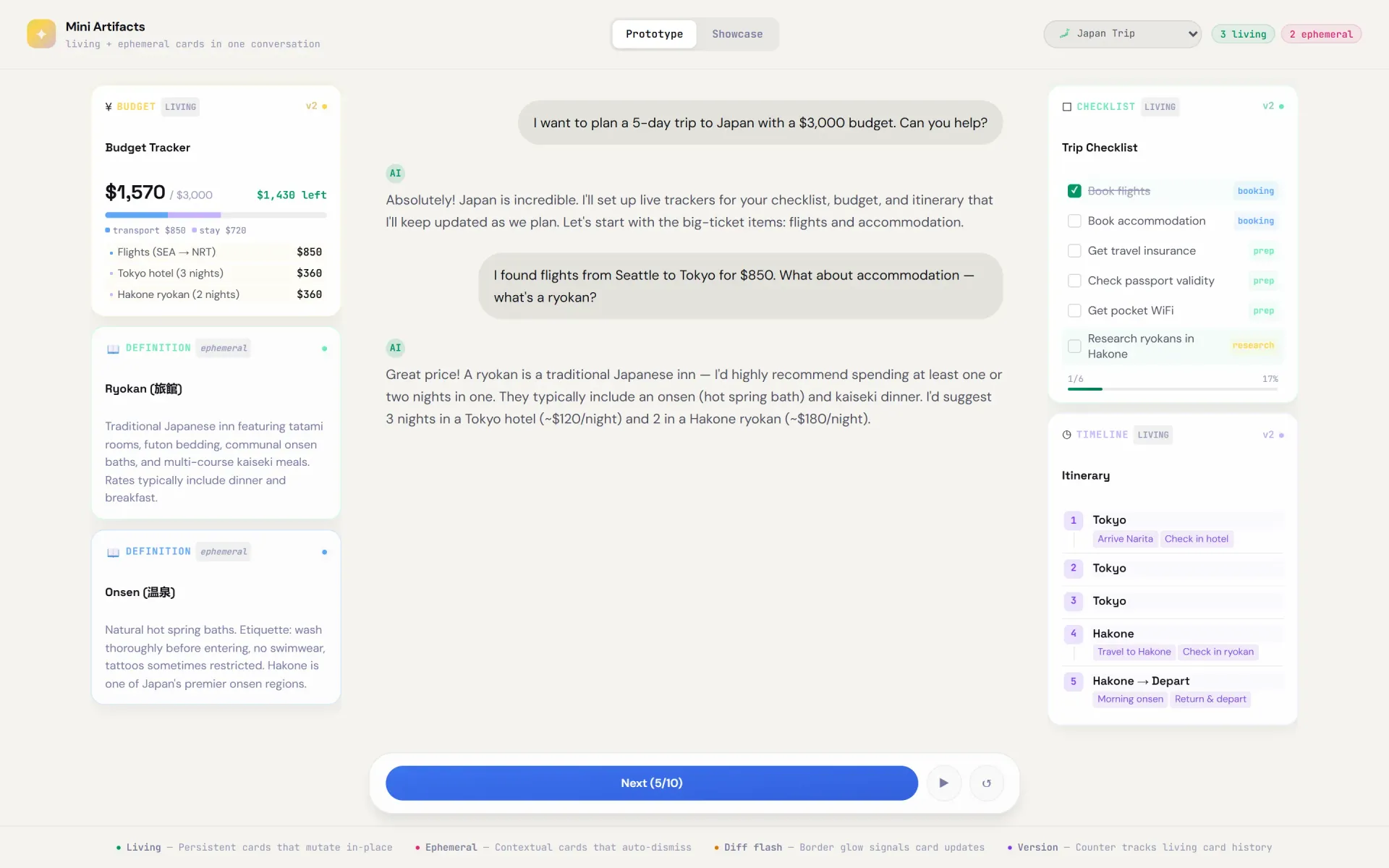

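Instead of only text in a stream, the AI gets cards it can spawn, mutate, and dismiss, living alongside chat in a three-column layout. The key insight: not all context has the same lifespan. This led to two card types: living cards (checklist, budget, timeline) that stay on screen and update as the plan changes, and ephemeral cards that surface short-lived contextual info such as definitions.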
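One way to picture the second output channel is as a small set of card actions the AI emits next to its text reply. The sketch below is a minimal illustration under that assumption, not the prototype's actual code: the names `Card`, `CardAction`, and `applyCardAction`, and the field layout, are all hypothetical.

```typescript
// Hypothetical card model. Names and fields are illustrative assumptions,
// not taken from the prototype's source.
type CardKind = "checklist" | "budget" | "timeline" | "definition";
type Lifespan = "living" | "ephemeral";

interface Card {
  id: string;
  kind: CardKind;
  lifespan: Lifespan;
  data: Record<string, unknown>;
}

// The three operations the AI can perform on its card channel.
type CardAction =
  | { type: "spawn"; card: Card }
  | { type: "mutate"; id: string; patch: Record<string, unknown> }
  | { type: "dismiss"; id: string };

// Pure reducer: returns a new card list and never edits the input in place.
function applyCardAction(cards: readonly Card[], action: CardAction): Card[] {
  switch (action.type) {
    case "spawn": {
      const { card } = action;
      // Ignore a repeated spawn so one id never maps to two cards.
      return cards.some((c) => c.id === card.id) ? [...cards] : [...cards, card];
    }
    case "mutate": {
      const { id, patch } = action;
      // Shallow-merge the patch into the matching card's data.
      return cards.map((c) =>
        c.id === id ? { ...c, data: { ...c.data, ...patch } } : c
      );
    }
    case "dismiss": {
      const { id } = action;
      return cards.filter((c) => c.id !== id);
    }
  }
}
```

Keeping the reducer pure means each scripted demo can be replayed step by step: feeding the same actions in the same order always yields the same card layout.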

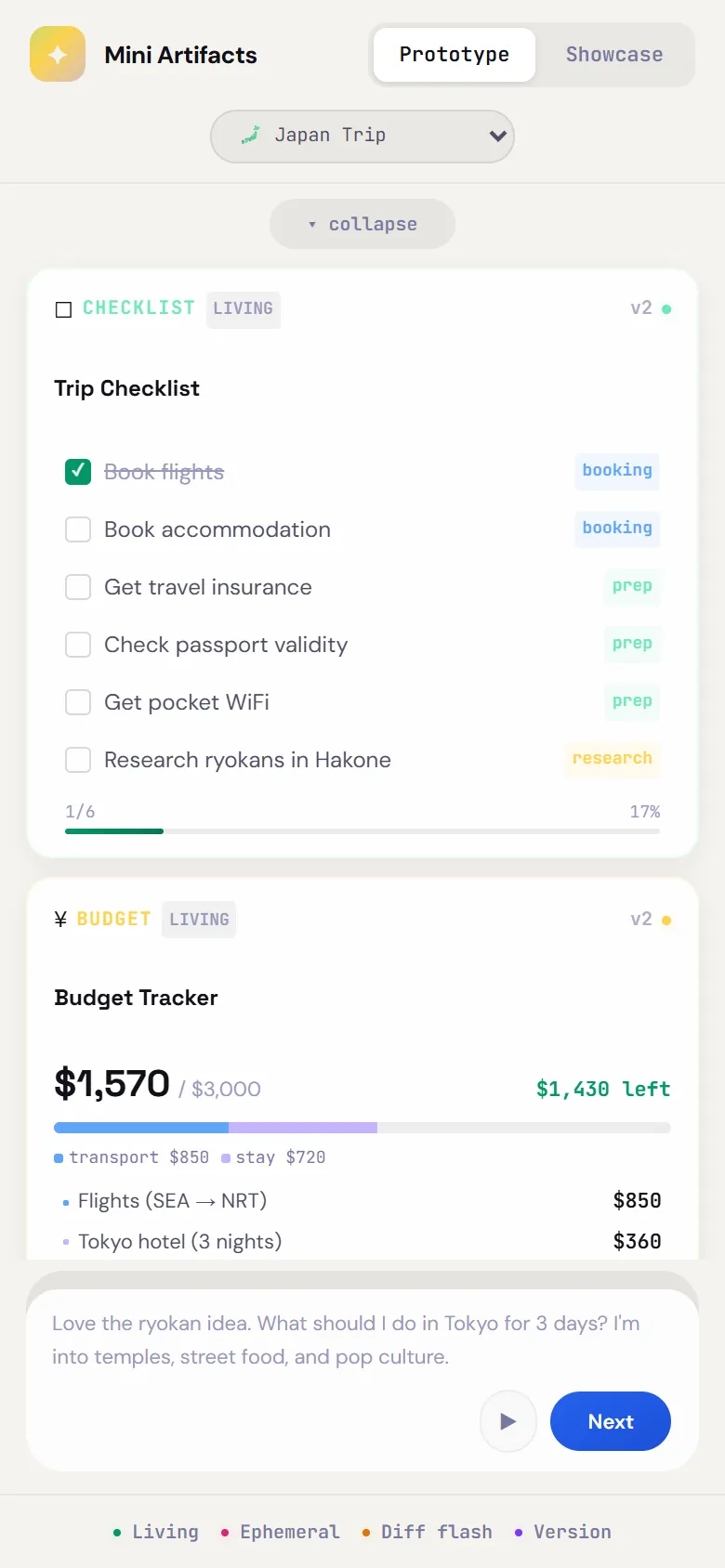

Living cards spawn as the AI responds — checklist, budget, and timeline appear simultaneously

Ephemeral cards appear with contextual info — here, definitions for “ryokan” and “onsen”

Scenarios

Seven domains, one pattern

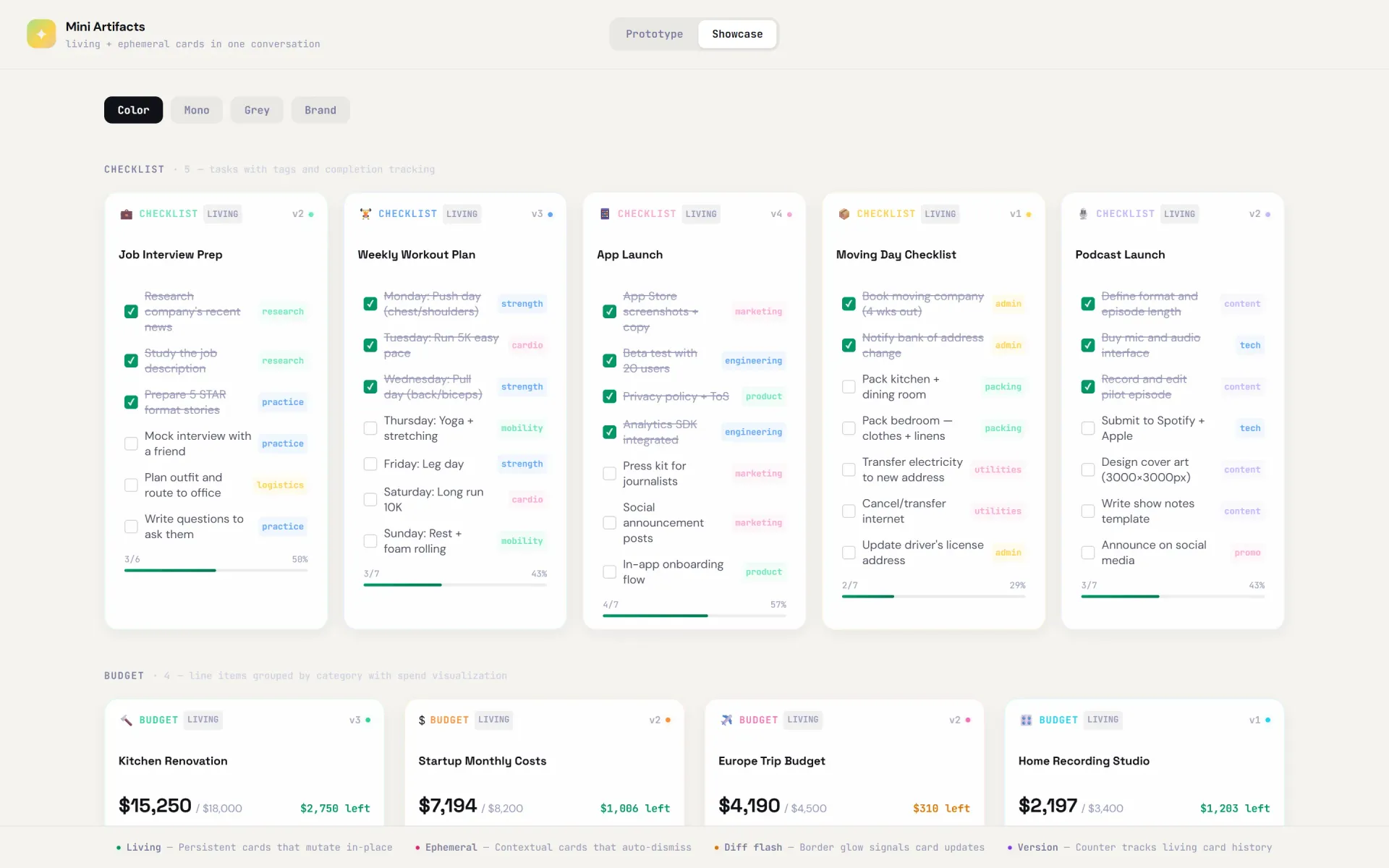

To test whether the card system generalizes, I built scripted demos across seven planning domains. Each spawns the same card types with domain-specific content.

The showcase page displays all card types with sample data, to check that the system generalizes across domains

Design Decisions

Each choice isolates a variable

Staggered spawn timing

200ms stagger between cards. Testing whether choreographed entry reduces perceived complexity versus simultaneous spawn.
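A rough sketch of how the stagger could be computed, assuming each card's entry animation accepts a delay in milliseconds. `spawnDelays` and `STAGGER_MS` are hypothetical names; only the 200ms value comes from the design above.

```typescript
// Stagger interval from the design decision above.
const STAGGER_MS = 200;

// Returns the entry delay for each card, in spawn order.
// Passing staggerMs = 0 gives the simultaneous-spawn comparison condition.
function spawnDelays(
  cardIds: readonly string[],
  staggerMs: number = STAGGER_MS
): Map<string, number> {
  const delays = new Map<string, number>();
  cardIds.forEach((id, index) => delays.set(id, index * staggerMs));
  return delays;
}

// Example: three living cards entering one after another.
const exampleDelays = spawnDelays(["checklist", "budget", "timeline"]);
console.log(exampleDelays.get("timeline")); // 400
```

Each delay could then feed a CSS `animation-delay` or a timer, depending on how the cards are rendered.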

Update flash system

1.4s glow when a card mutates. Testing whether peripheral attention cues are enough to register state changes without reading.
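The flash can be modeled as a time window that opens whenever a card mutates. This is a minimal sketch assuming each card records the timestamp of its last mutation. `isFlashing` and `FLASH_MS` are hypothetical names; the 1.4s duration is the only value taken from the design.

```typescript
// Glow duration from the design decision above (1.4s).
const FLASH_MS = 1400;

// True while a card should still show its update glow.
// lastMutatedAt and now are timestamps in milliseconds (e.g. from Date.now()).
function isFlashing(
  lastMutatedAt: number | null,
  now: number,
  flashMs: number = FLASH_MS
): boolean {
  if (lastMutatedAt === null) return false; // never mutated, so no glow
  const elapsed = now - lastMutatedAt;
  return elapsed >= 0 && elapsed < flashMs;
}
```

Because the window is derived from the latest mutation timestamp, a second update that lands mid-glow simply restarts the 1.4s window instead of stacking animations.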

Viewport-locked layout

Cards and controls never leave view. Testing the hypothesis that persistent visibility eliminates context-switching scrolls.

Color-coded categories

Per-demo color systems for tags and budget categories. Testing whether color-scanning outperforms text-scanning for structured data.

Responsive mobile layout

On mobile, cards collapse to colored indicator dots above the prompt. Tap to expand. Ephemeral cards flow inline with chat. Testing whether the spatial model translates to constrained viewports.

Mobile adaptation

Chat with card dots

Tap dots to expand

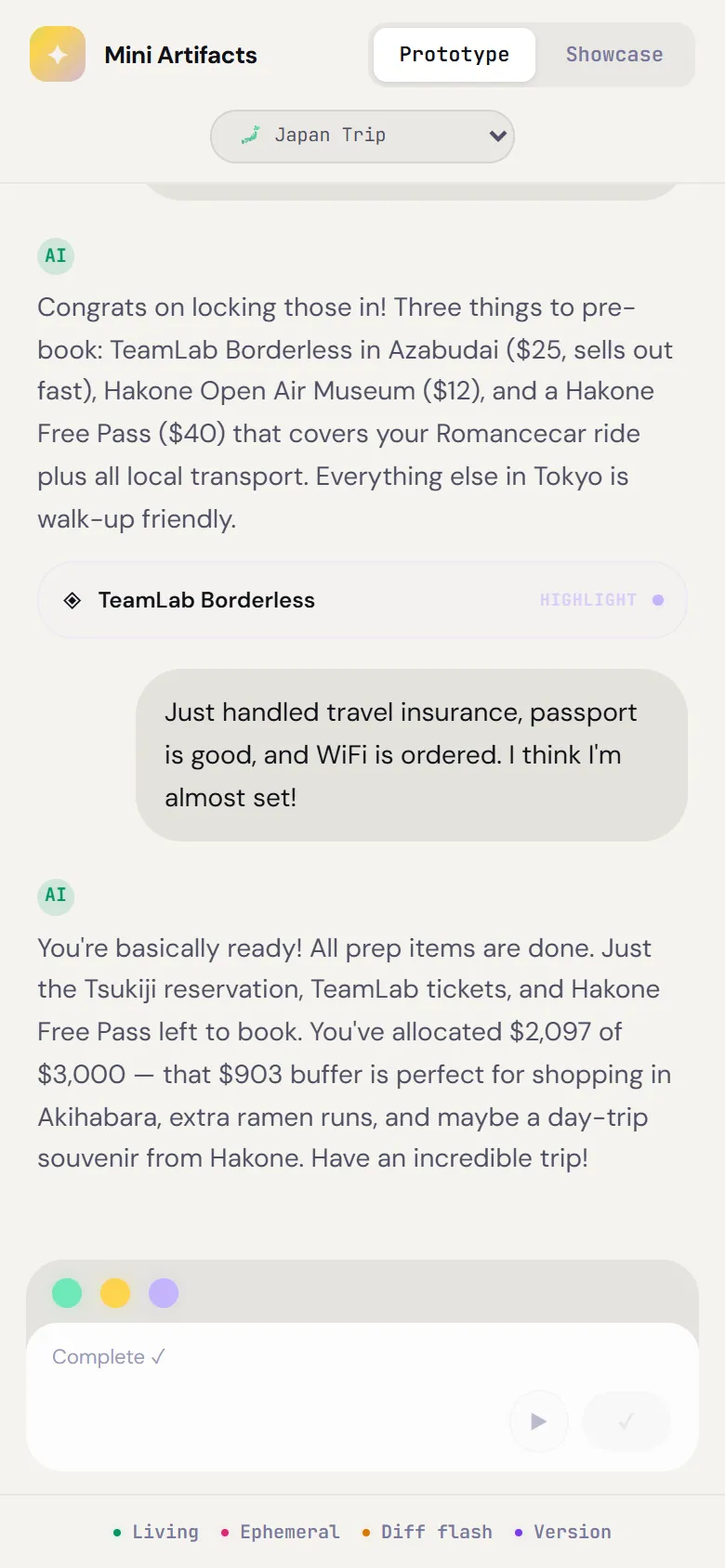

Complete conversation

Validation

How to prove it works

A prototype proves feasibility, not value. This is the evaluation framework to determine whether the spatial card model actually helps — or just looks interesting.

Core hypothesis: AI-managed spatial cards reduce cognitive load and improve task outcomes in multi-step planning conversations, compared to standard linear chat.

The framework has three parts: A/B test structure, what to measure, and kill criteria.

What's Next

Prototype built, needs validation

The prototype is functional across seven domains. It proves the interaction model is technically viable and generalizable. What it hasn't proven yet: whether users actually benefit from it.

The next step is to run A/B tests with real participants, measuring task comprehension, scroll distance, and cognitive load (NASA-TLX), to find out whether spatial cards are a useful pattern or just an interesting one.
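For the cognitive-load measure, one common scoring option is Raw TLX: the unweighted mean of the six NASA-TLX subscales, each rated from 0 to 100. The sketch below assumes that variant. The write-up does not say whether the raw or the weighted version would be used, and `TlxRatings` and `rawTlx` are hypothetical names.

```typescript
// The six NASA-TLX subscales, each rated 0 to 100.
interface TlxRatings {
  mentalDemand: number;
  physicalDemand: number;
  temporalDemand: number;
  performance: number;
  effort: number;
  frustration: number;
}

// Raw TLX: unweighted mean of the six subscale ratings.
// Assumption: the raw variant, not the pairwise-weighted one.
function rawTlx(r: TlxRatings): number {
  const values = [
    r.mentalDemand,
    r.physicalDemand,
    r.temporalDemand,
    r.performance,
    r.effort,
    r.frustration,
  ];
  for (const v of values) {
    if (!Number.isFinite(v) || v < 0 || v > 100) {
      throw new RangeError("Each TLX rating must be between 0 and 100");
    }
  }
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}
```

Computing one score per participant per condition gives a single number to compare between the card layout and standard linear chat.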