Tale

2M+ Americans with aphasia can think clearly but can't get the words out. Designed an AI communication aid that turns fragmented speech into complete conversations — validated with neurologists and speech clinics.

Overview

I led design, research, and brand strategy for Tale — an AI-powered speech assistance app for people with mild-to-moderate aphasia. I designed the UX paradigm for how patients interact with the system during real conversations, conducted expert interviews with neurologists and speech clinics, and shaped the product vision that took us through two startup competitions.

The Problem

2 million Americans can think clearly but can't say what they mean

Aphasia is a language disorder caused by stroke or brain injury. People with aphasia know exactly what they want to say — their intelligence is intact — but the words won't come out, or the wrong words come out. Imagine knowing the answer to every question but being unable to speak it.

Speech therapy costs $2,184–$7,392 per year on top of medical expenses. Only 3,560 speech-language pathologists specialize in aphasia, roughly one for every 560 of the 2 million patients. Existing assistive devices focus on severe cases, leaving the mild-to-moderate population underserved. These are people who can hold conversations; they just need the right word at the right time.

Research

Three expert consultations that shaped the product

February 2024 · Swedish Hospital, Seattle

Dr. William Lou, Neurologist

Validated the market need and confirmed our target user group. Advised measuring improvement by “raw number of words gained” rather than percentages, which he considered a more meaningful clinical metric. Recommended going to market through medical practitioners rather than relying on direct-to-consumer alone.

“There is a need for it in the current market for aphasia patients.”
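To illustrate why a raw word count can be more informative than a percentage, here is a small sketch. The function names and patient numbers are hypothetical and are not taken from Tale's data; they only show how the two metrics can rank the same progress differently.

```python
from typing import Optional


def words_gained(before: int, after: int) -> int:
    """Raw number of words gained between two assessments."""
    return after - before


def percent_gain(before: int, after: int) -> Optional[float]:
    """Relative gain in percent; undefined when the baseline is zero."""
    if before == 0:
        return None
    return (after - before) / before * 100


# Hypothetical patients: (words retrieved before, words retrieved after)
patient_a = (10, 20)
patient_b = (200, 240)

for name, (before, after) in {"A": patient_a, "B": patient_b}.items():
    print(name, words_gained(before, after), percent_gain(before, after))
```

In this made-up example, patient A doubles their baseline but gains only 10 words, while patient B improves by a fifth yet gains 40. The percentage also breaks down entirely for a patient starting from zero, which the raw count handles without special cases.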

February 2024 · University of Washington

Prof. Diane, Communication Sciences & Disorders

Provided critical linguistic insights: misused words follow predictable patterns across patients. Suggested category-based conversation boards (food, sports, family) rather than open-ended conversation contexts — patients are more likely to engage in structured topic areas.

“Misused words are normally similar across patients — this is a pattern you can design for.”

April 2024 · UW Speech Clinic

UW Speech Clinic Collaboration

Collaborated on feature refinement. Tested word retrieval effectiveness for stuttering patterns. Explored using the patient's own voice for familiarity, and pairing visual cues (pictures) with word retrieval suggestions. Identified the “chicken and egg” problem: the AI needs patient data to work well, but patients won't use it until it works well.

Key Insights

What research revealed

The Solution

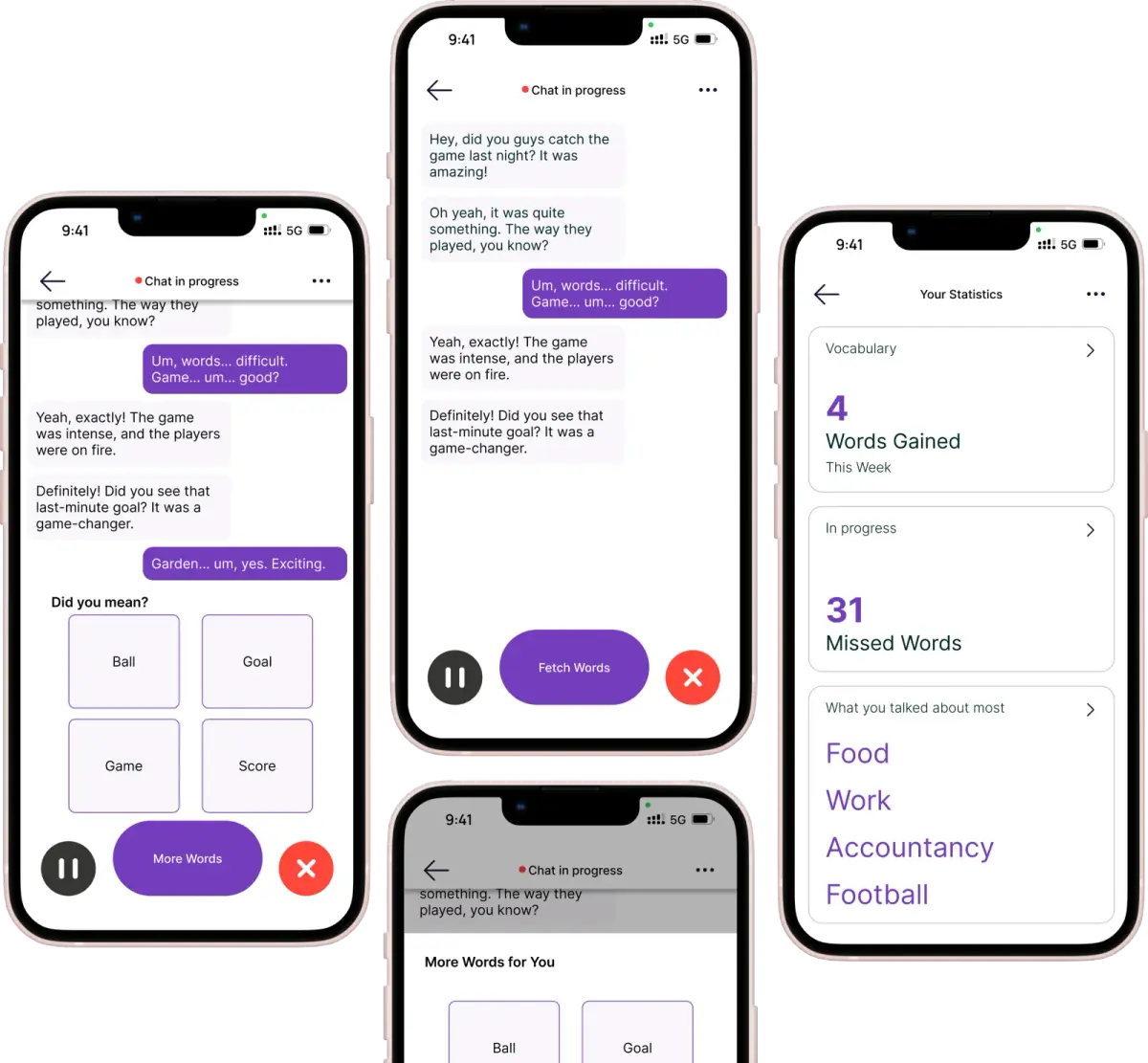

Tale: context-aware speech assistance

An AI-powered mobile app that listens to conversations in real-time, detects when a user is struggling to find a word, and suggests contextually appropriate completions — using the patient's own voice for familiarity. Not a replacement for speech therapy, but a companion that makes every conversation a practice opportunity.
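As a rough illustration of what "detecting a struggle" could mean, the sketch below flags long pauses and filler words in a timestamped transcript. This is a rule-based stand-in, not the model Tale set out to train; the `Word` type, the pause threshold, and the filler list are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical values, chosen only for illustration.
PAUSE_THRESHOLD_S = 1.5
FILLERS = {"um", "uh", "er"}


@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the utterance
    end: float


def find_struggle_points(words: list[Word]) -> list[int]:
    """Return indices of words that are fillers or that follow a long pause."""
    flagged = []
    for i, word in enumerate(words):
        is_filler = word.text.lower() in FILLERS
        long_pause = i > 0 and (word.start - words[i - 1].end) > PAUSE_THRESHOLD_S
        if is_filler or long_pause:
            flagged.append(i)
    return flagged


utterance = [
    Word("I", 0.0, 0.2),
    Word("want", 0.3, 0.6),
    Word("um", 0.8, 1.0),
    Word("coffee", 3.0, 3.5),
]
print(find_struggle_points(utterance))
```

A heuristic like this would misfire on natural pauses, which is part of why reliable detection needs real clinical speech data.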

UX Paradigm

Designing for people who can't tell you what's wrong

The core UX challenge: our users struggle with language itself. They can't easily articulate feedback, describe frustrations, or navigate complex interfaces. Every design decision had to account for cognitive load, emotional state, and physical limitations.

Category-based context, not open-ended

Instead of trying to understand arbitrary conversations, we designed around topic categories — food, family, sports, daily activities. This gave the AI a bounded context to work within and matched how speech therapy sessions are actually structured.
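A minimal sketch of how a bounded category narrows the suggestion problem, assuming simple prefix matching on the fragment the patient has produced. The vocabularies and the `suggest` function are hypothetical; the source does not describe Tale's actual matching logic.

```python
# Hypothetical category boards; the real vocabularies are not given in the source.
CATEGORY_WORDS = {
    "food": ["coffee", "cookie", "bread", "water"],
    "family": ["daughter", "brother", "cousin"],
}


def suggest(category: str, fragment: str, limit: int = 3) -> list[str]:
    """Return up to `limit` words in the active category that start with the fragment."""
    fragment = fragment.lower().strip()
    if not fragment:
        return []
    candidates = CATEGORY_WORDS.get(category, [])
    return [word for word in candidates if word.startswith(fragment)][:limit]


print(suggest("food", "co"))
print(suggest("family", "co"))
```

The same fragment yields different candidates depending on the active category, which is the point of bounding the context: the search space shrinks from the whole language to one board.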

Visual + audio dual cues

Word suggestions are paired with images. When the patient is trying to say “coffee,” they see the word, hear it in their own voice, and see a picture — three pathways to retrieval instead of one.

The patient's own voice

Hearing a stranger's voice say the word you're struggling with feels clinical. Hearing your own voice say it correctly, recorded before or soon after the stroke, creates a connection between the word and the self. This was a key design decision from our UW Speech Clinic collaboration.

Minimal, color-coded interface

Large tap targets, high contrast, minimal text. Color-coding for states (practicing, achieved, needs work) so patients can understand their progress at a glance without reading.
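The three progress states could be expressed as a small lookup like the one below. The hex colors and icon names are placeholders rather than Tale's actual palette, and pairing each color with an icon is an added assumption so that color is not the only signal.

```python
from enum import Enum


class WordState(Enum):
    PRACTICING = "practicing"
    ACHIEVED = "achieved"
    NEEDS_WORK = "needs work"


# Hypothetical palette and icons, for illustration only.
STATE_STYLE = {
    WordState.PRACTICING: {"color": "#1F6FEB", "icon": "circle"},
    WordState.ACHIEVED: {"color": "#1A7F37", "icon": "check"},
    WordState.NEEDS_WORK: {"color": "#B35900", "icon": "triangle"},
}


def style_for(state: WordState) -> dict[str, str]:
    """Look up the display style for a word's progress state."""
    return STATE_STYLE[state]


print(style_for(WordState.ACHIEVED))
```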

Validation

Two competitions, real feedback

Hollomon Health Innovation Challenge 2024

Finalist

Selected as finalists among healthcare innovation teams. Presented to a panel of healthcare investors and industry experts who validated the market need and technical approach.

Dempsey Startup Competition 2024

Investment Round

Advanced to the investment round — the stage where judges evaluate startups for actual funding. Pitch focused on the $14.6B speech impairment market and our differentiation through context-aware AI.

Outcome

What happened and why we stopped

Tale validated the problem, the market, and the approach — through expert interviews, clinical collaboration, and two competitive rounds. The prototype demonstrated word retrieval effectiveness for stuttering patterns.

We stopped because we hit a fundamental blocker: insufficient clinical speech data to train a model that could reliably detect struggle patterns and generate contextually accurate suggestions. The “chicken and egg” problem we identified with the UW Speech Clinic proved to be the critical constraint — you need patient data to build the AI, but patients won't use a tool that doesn't work well yet.

The team decided to shut down. The research, the UX paradigm, and the clinical relationships remain valuable: this is a problem that will be solved, and the groundwork we laid contributes to that future.

Reflections

What I learned

Designing for vulnerability requires humility

Our users couldn't articulate what was wrong with our design. Standard usability testing doesn't work when language itself is the barrier. I learned to observe behavior, not ask questions — and to design interfaces that assume nothing about the user's current cognitive state.

Clinical validation ≠ product-market fit

Three experts told us the market needed this. Two competitions validated the idea. But without the training data to make the core AI work reliably, clinical validation alone couldn't carry us to product. The hardest part of AI products isn't the model — it's the data.

The UX paradigm outlives the product

The design decisions — category-based context, dual visual+audio cues, patient's own voice, privacy-first processing — are transferable to any speech assistance tool. Even though Tale stopped, the interaction patterns I designed are valid for whoever solves this next.